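Preface

The previous article in this series, Kubernetes Tutorial Series (7): A Deep Dive into Pod Scheduling, covered how Kubernetes schedules Pods and walked through three ways to place a Pod on a node: 1. pinning it with nodeName, 2. targeting nodes with nodeSelector, and 3. scheduling with node affinity. This article continues the series with the Pod health check mechanism.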

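1. Health checks

1.1 Health check overview

Applications inevitably hit errors while running: program exceptions, software faults, hardware failures, network failures, and so on. Kubernetes provides a health check mechanism that restarts a container automatically when the application is found to be unhealthy and removes the application from its service, which keeps the application highly available. Kubernetes defines three kinds of probe: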

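- readiness probes: readiness checks. They determine whether the application is ready to accept traffic; a ready Pod is added to the endpoints, otherwise it is removed.
- liveness probes: liveness checks. They detect whether the application is still usable (for example deadlocked or unresponsive); when the check fails, the container is restarted automatically.
- startup probes: startup checks, intended for slow-starting applications, so that a long startup does not get the container killed by the other probes. While a startup probe is configured, the other probes are held off until it succeeds.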

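Each probe supports three check methods: exec (command line), httpGet, and tcpSocket. exec is the most general and fits most scenarios, tcpSocket suits TCP services, and httpGet suits web services.

- exec: runs a command or shell snippet inside the container; exit code 0 means healthy, non-zero means unhealthy.
- httpGet: HTTP probing; the kubelet sends an HTTP GET request to the container and judges health by the response status code.
- tcpSocket: TCP probing; the kubelet tries to open a TCP connection to the container, and a successful connection means healthy.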
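
As a minimal sketch of how the three probes and the three check methods can be combined on one container, consider the manifest below. The Pod name, the thresholds, and the pairing of each probe with a method are illustrative assumptions, not values from this article, and the sketch assumes a cluster recent enough to support startupProbe.

```yaml
# Hypothetical Pod: names and numbers are illustrative only.
apiVersion: v1
kind: Pod
metadata:
  name: three-probes-demo
spec:
  containers:
  - name: web
    image: nginx:latest
    ports:
    - containerPort: 80
    startupProbe:             # runs first; the other probes wait until it succeeds
      tcpSocket:
        port: 80
      periodSeconds: 10
      failureThreshold: 30    # allows up to 30 x 10s for the application to start
    livenessProbe:            # a failing liveness probe restarts the container
      exec:
        command:
        - ls
        - /usr/share/nginx/html/index.html
      periodSeconds: 10
    readinessProbe:           # a failing readiness probe removes the Pod from the endpoints
      httpGet:
        path: /index.html
        port: 80
        scheme: HTTP
      periodSeconds: 10
```

Each probe takes exactly one check method, which is why the sketch spreads the three methods across the three probes rather than stacking them inside one.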

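All check methods share a set of parameters that control probe timing:

- initialDelaySeconds: delay before the first probe, giving the application time to start so that the check does not fail before the application is up.
- periodSeconds: how often the probe runs; defaults to 10s.
- timeoutSeconds: how long to wait for a probe response before counting the attempt as a failure; defaults to 1s.
- successThreshold: number of consecutive successes required for the probe to count as healthy; defaults to 1.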
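
The fragment below sketches these parameters with their default values written out. The probe target is illustrative. It also shows failureThreshold, a companion field that the list above does not cover: it sets how many consecutive failures are needed before the probe counts as failed.

```yaml
# Fragment of a container spec; the httpGet target is illustrative.
livenessProbe:
  httpGet:
    path: /index.html
    port: 80
  initialDelaySeconds: 0   # default: no extra delay before the first probe
  periodSeconds: 10        # default: probe every 10s
  timeoutSeconds: 1        # default: each attempt times out after 1s
  successThreshold: 1      # default; must be 1 for liveness and startup probes
  failureThreshold: 3      # default: 3 consecutive failures mark the probe as failed
```

With these values, a container that stops responding is restarted after roughly failureThreshold x periodSeconds, that is, about 30s.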

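1.2 exec command-line health check

Many applications cannot detect their own internal faults, such as deadlocks, even though a restart would bring them back. Kubernetes provides the liveness check for this case. Taking exec as an example: the container creates the file /tmp/liveness-probe.log on startup and deletes it 10s later, while the liveness probe runs ls -l /tmp/liveness-probe.log inside the container and uses the command's exit code to judge health. The container then sleeps for another 20s; during that time the probe returns a non-zero exit code, and once it has failed failureThreshold times in a row (3 by default) the kubelet restarts the container.

- Define a container that creates a file on startup. While the file exists, ls -l /tmp/liveness-probe.log exits with 0 and the health check passes; after the file is deleted 10s later, the exit code is non-zero and the health check fails.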

    [root@node-1 demo]# cat centos-exec-liveness-probe.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: exec-liveness-probe
      annotations:
        kubernetes.io/description: "exec-liveness-probe"
    spec:
      containers:
      - name: exec-liveness-probe
        image: centos:latest
        imagePullPolicy: IfNotPresent
        args:    # container start command; the container lives for about 30s
        - /bin/sh
        - -c
        - touch /tmp/liveness-probe.log && sleep 10 && rm -f /tmp/liveness-probe.log && sleep 20
        livenessProbe:
          exec:    # health check: the exit code of ls -l /tmp/liveness-probe.log decides the container's health
            command:
            - ls
            - -l    # option flag; must be "-l", not "l"
            - /tmp/liveness-probe.log
          initialDelaySeconds: 1
          periodSeconds: 5
          timeoutSeconds: 1

- Apply the configuration to create the container

    [root@node-1 demo]# kubectl apply -f centos-exec-liveness-probe.yaml
    pod/exec-liveness-probe created

- Check the container's event log. The container is meant to stay healthy for the first 10s after it starts and to fail the liveness check from about 11s on, which triggers a restart. In the capture below the probe already fails on its first runs with ls: cannot access l, which shows that this run passed l instead of -l to ls.

    [root@node-1 demo]# kubectl describe pods exec-liveness-probe | tail
    Events:
      Type     Reason     Age                From               Message
      ----     ------     ----               ----               -------
      Normal   Scheduled  28s                default-scheduler  Successfully assigned default/exec-liveness-probe to node-3
      Normal   Pulled     27s                kubelet, node-3    Container image "centos:latest" already present on machine
      Normal   Created    27s                kubelet, node-3    Created container exec-liveness-probe
      Normal   Started    27s                kubelet, node-3    Started container exec-liveness-probe
      # container started
      Warning  Unhealthy  20s (x2 over 25s)  kubelet, node-3    Liveness probe failed: /tmp/liveness-probe.log
    ls: cannot access l: No such file or directory    # health check ran and failed
      Warning  Unhealthy  15s                kubelet, node-3    Liveness probe failed: ls: cannot access l: No such file or directory
    ls: cannot access /tmp/liveness-probe.log: No such file or directory
      Normal   Killing    15s                kubelet, node-3    Container exec-liveness-probe failed liveness probe, will be restarted
      # container restarted

- Check the restart count. Because the container keeps going through the same cycle, the RESTARTS value keeps increasing.

    [root@node-1 demo]# kubectl get pods exec-liveness-probe
    NAME                  READY   STATUS    RESTARTS   AGE
    exec-liveness-probe   1/1     Running   6          5m19s
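
In the example above the container's own command ends after about 30s, so a restart can also come from the process simply exiting. The sketch below is a hypothetical variant (the Pod name and the timings are assumptions) in which the process keeps running long after the file is removed, so any restart can only be caused by the liveness probe.

```yaml
# Hypothetical variant of the exec example; name and timings are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: exec-liveness-long-running
spec:
  containers:
  - name: exec-liveness-long-running
    image: centos:latest
    imagePullPolicy: IfNotPresent
    args:
    - /bin/sh
    - -c
    # healthy for 30s, then unhealthy; the process itself stays alive for 10 more minutes
    - touch /tmp/liveness-probe.log && sleep 30 && rm -f /tmp/liveness-probe.log && sleep 600
    livenessProbe:
      exec:
        command:       # cat exits non-zero once the file is gone
        - cat
        - /tmp/liveness-probe.log
      initialDelaySeconds: 5
      periodSeconds: 5
```

Applied with kubectl apply -f, this Pod should show Unhealthy events only after the file disappears, followed by a Killing event once the default failureThreshold of 3 is reached.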

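1.3 httpGet health check

- The httpGet probe is mainly used for web workloads. The kubelet sends an HTTP request to the container and judges its health by the response status code: any code from 200 up to, but not including, 400 counts as healthy. The following defines an nginx application whose health is determined by probing http://:port/index.html, that is, /index.html on the container's port 80.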

    [root@node-1 demo]# cat nginx-httpGet-liveness-readiness.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-httpget-livess-readiness-probe
      annotations:
        kubernetes.io/description: "nginx-httpGet-livess-readiness-probe"
    spec:
      containers:
      - name: nginx-httpget-livess-readiness-probe
        image: nginx:latest
        ports:
        - name: http-80-port
          protocol: TCP
          containerPort: 80
        livenessProbe:    # health check implemented with httpGet
          httpGet:
            port: 80
            scheme: HTTP
            path: /index.html
          initialDelaySeconds: 3
          periodSeconds: 10
          timeoutSeconds: 3

- Create the pod and check its health

    [root@node-1 demo]# kubectl apply -f nginx-httpGet-liveness-readiness.yaml
    pod/nginx-httpget-livess-readiness-probe created
    [root@node-1 demo]# kubectl get pods nginx-httpget-livess-readiness-probe
    NAME                                   READY   STATUS    RESTARTS   AGE
    nginx-httpget-livess-readiness-probe   1/1     Running   0          6s

- Simulate a failure by deleting the file behind the probe path inside the pod. The HTTP request then fails the health check, which triggers an automatic container restart.

    # Find the node the pod is running on
    [root@node-1 demo]# kubectl get pods nginx-httpget-livess-readiness-probe -o wide
    NAME                                   READY   STATUS    RESTARTS   AGE    IP            NODE     NOMINATED NODE   READINESS GATES
    nginx-httpget-livess-readiness-probe   1/1     Running   1          3m9s   10.244.2.19   node-3   <none>           <none>

    # Log in to the pod and delete the file
    [root@node-1 demo]# kubectl exec -it nginx-httpget-livess-readiness-probe -- /bin/bash
    root@nginx-httpget-livess-readiness-probe:/# ls -l /usr/share/nginx/html/index.html
    -rw-r--r-- 1 root root 612 Sep 24 14:49 /usr/share/nginx/html/index.html
    root@nginx-httpget-livess-readiness-probe:/# rm -f /usr/share/nginx/html/index.html

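- List the pod again. The RESTARTS count has gone up by 1, which means the container has been restarted once. AGE is the time since the pod was created, not since the last restart.

    [root@node-1 demo]# kubectl get pods nginx-httpget-livess-readiness-probe
    NAME                                   READY   STATUS    RESTARTS   AGE
    nginx-httpget-livess-readiness-probe   1/1     Running   1          4m22s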

- Inspect the pod details to follow the restart: the liveness check received a 404 from the container, which triggered the restart.

    [root@node-1 demo]# kubectl describe pods nginx-httpget-livess-readiness-probe | tail
    Events:
      Type     Reason     Age                    From               Message
      ----     ------     ----                   ----               -------
      Normal   Scheduled  5m45s                  default-scheduler  Successfully assigned default/nginx-httpget-livess-readiness-probe to node-3
      Normal   Pulling    3m29s (x2 over 5m45s)  kubelet, node-3    Pulling image "nginx:latest"
      Warning  Unhealthy  3m29s (x3 over 3m49s)  kubelet, node-3    Liveness probe failed: HTTP probe failed with statuscode: 404
      Normal   Killing    3m29s                  kubelet, node-3    Container nginx-httpget-livess-readiness-probe failed liveness probe, will be restarted
      Normal   Pulled     3m25s (x2 over 5m41s)  kubelet, node-3    Successfully pulled image "nginx:latest"
      Normal   Created    3m25s (x2 over 5m40s)  kubelet, node-3    Created container nginx-httpget-livess-readiness-probe
      Normal   Started    3m25s (x2 over 5m40s)  kubelet, node-3    Started container nginx-httpget-livess-readiness-probe
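
The httpGet handler accepts a few more fields than the example uses. The sketch below is a hypothetical variant (the Pod name, the port name http, and the X-Probe header are assumptions) that refers to the container port by name and sends a custom request header with every probe.

```yaml
# Hypothetical variant of the httpGet example; names and header are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-httpget-headers-demo
spec:
  containers:
  - name: nginx
    image: nginx:latest
    ports:
    - name: http
      containerPort: 80
    livenessProbe:
      httpGet:
        port: http            # refers to the named container port above
        path: /index.html
        scheme: HTTP
        httpHeaders:          # extra request headers sent with each probe
        - name: X-Probe
          value: liveness
      initialDelaySeconds: 3
      periodSeconds: 10
      timeoutSeconds: 3
      failureThreshold: 3     # default value, written out for clarity
```

Using the port name means the probe keeps working if containerPort changes later, and a dedicated header makes probe requests easy to tell apart in the web server's access log.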

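1.4 tcpSocket health check

- The tcpSocket health check suits TCP services. The kubelet tries to open a TCP connection to the specified container port: if the connection can be established the check passes, otherwise it fails. The example again uses nginx, this time with a TCP check that probes connectivity on port 80.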

    [root@node-1 demo]# cat nginx-tcp-liveness.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-tcp-liveness-probe
      annotations:
        kubernetes.io/description: "nginx-tcp-liveness-probe"
    spec:
      containers:
      - name: nginx-tcp-liveness-probe
        image: nginx:latest
        ports:
        - name: http-80-port
          protocol: TCP
          containerPort: 80
        livenessProbe:    # tcpSocket health check probing TCP port 80
          tcpSocket:
            port: 80
          initialDelaySeconds: 3
          periodSeconds: 10
          timeoutSeconds: 3

- Apply the configuration to create the container

    [root@node-1 demo]# kubectl apply -f nginx-tcp-liveness.yaml
    pod/nginx-tcp-liveness-probe created

    [root@node-1 demo]# kubectl get pods nginx-tcp-liveness-probe
    NAME                       READY   STATUS    RESTARTS   AGE
    nginx-tcp-liveness-probe   1/1     Running   0          6s

- 模拟故障,获取pod所属节点,登录到pod中,安装查看进程工具htop

1获取pod所在node

2[root@node-1 demo]# kubectl get pods nginx-tcp-liveness-probe -o wide

3NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

4nginx-tcp-liveness-probe 1/1 Running 0 99s 10.244.2.20 node-3 <none> <none>

5

6登录到pod中

7[root@node-1 demo]# kubectl exec -it nginx-httpget-livess-readiness-probe /bin/bash

8

9#执行apt-get update更新和apt-get install htop安装工具

10root@nginx-httpget-livess-readiness-probe:/# apt-get update

11Get:1 http://cdn-fastly.deb.debian.org/debian buster InRelease [122 kB]

12Get:2 http://security-cdn.debian.org/debian-security buster/updates InRelease [39.1 kB]

13Get:3 http://cdn-fastly.deb.debian.org/debian buster-updates InRelease [49.3 kB]

14Get:4 http://security-cdn.debian.org/debian-security buster/updates/main amd64 Packages [95.7 kB]

15Get:5 http://cdn-fastly.deb.debian.org/debian buster/main amd64 Packages [7899 kB]

16Get:6 http://cdn-fastly.deb.debian.org/debian buster-updates/main amd64 Packages [5792 B]

17Fetched 8210 kB in 3s (3094 kB/s)

18Reading package lists... Done

19root@nginx-httpget-livess-readiness-probe:/# apt-get install htop

20Reading package lists... Done

21Building dependency tree

22Reading state information... Done

23Suggested packages:

24 lsof strace

25The following NEW packages will be installed:

26 htop

270 upgraded, 1 newly installed, 0 to remove and 5 not upgraded.

28Need to get 92.8 kB of archives.

29After this operation, 230 kB of additional disk space will be used.

30Get:1 http://cdn-fastly.deb.debian.org/debian buster/main amd64 htop amd64 2.2.0-1+b1 [92.8 kB]

31Fetched 92.8 kB in 0s (221 kB/s)

32debconf: delaying package configuration, since apt-utils is not installed

33Selecting previously unselected package htop.

34(Reading database ... 7203 files and directories currently installed.)

35Preparing to unpack .../htop_2.2.0-1+b1_amd64.deb ...

36Unpacking htop (2.2.0-1+b1) ...

37Setting up htop (2.2.0-1+b1) ...

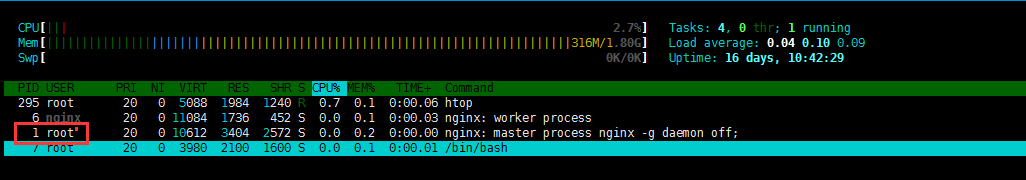

- 运行htop查看进程,容器进程通常为1

- kill掉进程观察容器状态,观察RESTART次数重启次数增加

1root@nginx-httpget-livess-readiness-probe:/# kill 1

2root@nginx-httpget-livess-readiness-probe:/# command terminated with exit code 137

3

4查看pod情况

5[root@node-1 demo]# kubectl get pods nginx-tcp-liveness-probe

6NAME READY STATUS RESTARTS AGE

7nginx-tcp-liveness-probe 1/1 Running 1 13m

- Inspect the pod details: the events show the container being pulled, created, and started a second time. No Unhealthy event is recorded, because killing PID 1 makes the container exit, and the restart then follows from the pod's restartPolicy rather than from a failed probe.

    [root@node-1 demo]# kubectl describe pods nginx-tcp-liveness-probe | tail
    Tolerations:     node.kubernetes.io/not-ready:NoExecute for 300s
                     node.kubernetes.io/unreachable:NoExecute for 300s
    Events:
      Type    Reason     Age                From               Message
      ----    ------     ----               ----               -------
      Normal  Scheduled  14m                default-scheduler  Successfully assigned default/nginx-tcp-liveness-probe to node-3
      Normal  Pulling    44s (x2 over 14m)  kubelet, node-3    Pulling image "nginx:latest"
      Normal  Pulled     40s (x2 over 14m)  kubelet, node-3    Successfully pulled image "nginx:latest"
      Normal  Created    40s (x2 over 14m)  kubelet, node-3    Created container nginx-tcp-liveness-probe
      Normal  Started    40s (x2 over 14m)  kubelet, node-3    Started container nginx-tcp-liveness-probe
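
The walkthrough above restarts the container by killing PID 1. To watch the TCP probe itself fail, one option is to point it at a port where nothing is listening. The sketch below is a hypothetical variant (the Pod name and port 8080 are assumptions) that relies on the stock nginx image serving only on port 80 in its default configuration.

```yaml
# Hypothetical variant of the tcpSocket example; name and port are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-tcp-liveness-fail-demo
spec:
  containers:
  - name: nginx
    image: nginx:latest
    ports:
    - containerPort: 80
    livenessProbe:
      tcpSocket:
        port: 8080          # nothing listens here by default, so the connection attempt fails
      initialDelaySeconds: 3
      periodSeconds: 10
      timeoutSeconds: 3
```

With this manifest, kubectl describe should report Unhealthy liveness events followed by a Killing event, and the RESTARTS count should keep rising without anyone touching the container.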

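1.5 readiness check

The readiness check applies when an application sits behind a service. It determines whether the application has finished getting ready, that is, whether it can accept forwarded traffic. When the check passes, the pod is added to the service's endpoints; when it fails, the pod is removed from them, so that client access is not affected.

- Create a pod that uses the httpGet check method and defines a readiness probe on the path /test.html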

    [root@node-1 demo]# cat httpget-liveness-readiness-probe.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-tcp-liveness-probe
      annotations:
        kubernetes.io/description: "nginx-tcp-liveness-probe"
      labels:    # labels are required; the service defined later selects on them
        app: nginx
    spec:
      containers:
      - name: nginx-tcp-liveness-probe
        image: nginx:latest
        ports:
        - name: http-80-port
          protocol: TCP
          containerPort: 80
        livenessProbe:    # liveness probe
          httpGet:
            port: 80
            path: /index.html
            scheme: HTTP
          initialDelaySeconds: 3
          periodSeconds: 10
          timeoutSeconds: 3
        readinessProbe:    # readiness probe
          httpGet:
            port: 80
            path: /test.html
            scheme: HTTP
          initialDelaySeconds: 3
          periodSeconds: 10
          timeoutSeconds: 3

- Define a service that includes the pod above. Note that it selects on the label defined earlier, app=nginx.

    [root@node-1 demo]# cat nginx-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: nginx
      name: nginx-service
    spec:
      ports:
      - name: http
        port: 80
        protocol: TCP
        targetPort: 80
      selector:
        app: nginx
      type: ClusterIP

- Apply the configuration

    [root@node-1 demo]# kubectl apply -f httpget-liveness-readiness-probe.yaml
    pod/nginx-tcp-liveness-probe created
    [root@node-1 demo]# kubectl apply -f nginx-service.yaml
    service/nginx-service created

- At this point the pod is running, but its readiness check is failing

    [root@node-1 ~]# kubectl get pods nginx-httpget-livess-readiness-probe
    NAME                                   READY   STATUS    RESTARTS   AGE
    nginx-httpget-livess-readiness-probe   1/1     Running   2          153m

    # The readiness check fails with a 404 (last line)
    [root@node-1 demo]# kubectl describe pods nginx-tcp-liveness-probe | tail
                     node.kubernetes.io/unreachable:NoExecute for 300s
    Events:
      Type     Reason     Age                 From               Message
      ----     ------     ----                ----               -------
      Normal   Scheduled  2m6s                default-scheduler  Successfully assigned default/nginx-tcp-liveness-probe to node-3
      Normal   Pulling    2m5s                kubelet, node-3    Pulling image "nginx:latest"
      Normal   Pulled     2m1s                kubelet, node-3    Successfully pulled image "nginx:latest"
      Normal   Created    2m1s                kubelet, node-3    Created container nginx-tcp-liveness-probe
      Normal   Started    2m1s                kubelet, node-3    Started container nginx-tcp-liveness-probe
      Warning  Unhealthy  2s (x12 over 112s)  kubelet, node-3    Readiness probe failed: HTTP probe failed with statuscode: 404

- Look at the service's endpoints: they are empty. Because the readiness check is failing, the kubelet marks the pod as not ready, so its address is not added to the ready endpoints.

    [root@node-1 ~]# kubectl describe services nginx-service
    Name:              nginx-service
    Namespace:         default
    Labels:            app=nginx
    Annotations:       kubectl.kubernetes.io/last-applied-configuration:
                         {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app":"nginx"},"name":"nginx-service","namespace":"default"},"s...
    Selector:          app=nginx
    Type:              ClusterIP
    IP:                10.110.54.40
    Port:              http  80/TCP
    TargetPort:        80/TCP
    Endpoints:         <none>    # the Endpoints list is empty
    Session Affinity:  None
    Events:            <none>

    # State of the endpoints object
    [root@node-1 demo]# kubectl describe endpoints nginx-service
    Name:         nginx-service
    Namespace:    default
    Labels:       app=nginx
    Annotations:  endpoints.kubernetes.io/last-change-trigger-time: 2019-09-30T14:27:37Z
    Subsets:
      Addresses:          <none>
      NotReadyAddresses:  10.244.2.22    # the pod is in the NotReady state
      Ports:
        Name  Port  Protocol
        ----  ----  --------
        http  80    TCP

    Events:  <none>

- Log in to the pod and create the page by hand so that the readiness check passes

    [root@node-1 ~]# kubectl exec -it nginx-tcp-liveness-probe -- /bin/bash
    root@nginx-tcp-liveness-probe:/# echo "readiness probe demo" >/usr/share/nginx/html/test.html

- The readiness check now passes. Once the kubelet detects that the pod is ready, the pod is added to the endpoints.

    # The health check now passes
    [root@node-1 demo]# curl http://10.244.2.22/test.html

    # Check the endpoints
    [root@node-1 demo]# kubectl describe endpoints nginx-service
    Name:         nginx-service
    Namespace:    default
    Labels:       app=nginx
    Annotations:  endpoints.kubernetes.io/last-change-trigger-time: 2019-09-30T14:33:01Z
    Subsets:
      Addresses:          10.244.2.22    # ready address: moved out of NotReadyAddresses into the regular Addresses list
      NotReadyAddresses:  <none>
      Ports:
        Name  Port  Protocol
        ----  ----  --------
        http  80    TCP

    # Check the service
    [root@node-1 demo]# kubectl describe services nginx-service
    Name:              nginx-service
    Namespace:         default
    Labels:            app=nginx
    Annotations:       kubectl.kubernetes.io/last-applied-configuration:
                         {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app":"nginx"},"name":"nginx-service","namespace":"default"},"s...
    Selector:          app=nginx
    Type:              ClusterIP
    IP:                10.110.54.40
    Port:              http  80/TCP
    TargetPort:        80/TCP
    Endpoints:         10.244.2.22:80    # now associated with the endpoints
    Session Affinity:  None
    Events:            <none>

- In the same way, if the container's readiness check starts failing, the pod is automatically removed from the endpoints

    # Delete the page so that the health check fails
    [root@node-1 demo]# kubectl exec -it nginx-tcp-liveness-probe -- /bin/bash
    root@nginx-tcp-liveness-probe:/# rm -f /usr/share/nginx/html/test.html

    # Check the pod's health check events
    [root@node-1 demo]# kubectl get pods nginx-tcp-liveness-probe
    NAME                       READY   STATUS    RESTARTS   AGE
    nginx-tcp-liveness-probe   0/1     Running   0          11m
    [root@node-1 demo]# kubectl describe pods nginx-tcp-liveness-probe | tail
                     node.kubernetes.io/unreachable:NoExecute for 300s
    Events:
      Type     Reason     Age                  From               Message
      ----     ------     ----                 ----               -------
      Normal   Scheduled  12m                  default-scheduler  Successfully assigned default/nginx-tcp-liveness-probe to node-3
      Normal   Pulling    12m                  kubelet, node-3    Pulling image "nginx:latest"
      Normal   Pulled     11m                  kubelet, node-3    Successfully pulled image "nginx:latest"
      Normal   Created    11m                  kubelet, node-3    Created container nginx-tcp-liveness-probe
      Normal   Started    11m                  kubelet, node-3    Started container nginx-tcp-liveness-probe
      Warning  Unhealthy  119s (x32 over 11m)  kubelet, node-3    Readiness probe failed: HTTP probe failed with statuscode: 404

    # Check the endpoints
    [root@node-1 demo]# kubectl describe endpoints nginx-service
    Name:         nginx-service
    Namespace:    default
    Labels:       app=nginx
    Annotations:  endpoints.kubernetes.io/last-change-trigger-time: 2019-09-30T14:38:01Z
    Subsets:
      Addresses:          <none>
      NotReadyAddresses:  10.244.2.22    # the health check fails, so the address is back under NotReady
      Ports:
        Name  Port  Protocol
        ----  ----  --------
        http  80    TCP

    Events:  <none>

    # Check the service; its endpoints are now empty
    [root@node-1 demo]# kubectl describe services nginx-service
    Name:              nginx-service
    Namespace:         default
    Labels:            app=nginx
    Annotations:       kubectl.kubernetes.io/last-applied-configuration:
                         {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app":"nginx"},"name":"nginx-service","namespace":"default"},"s...
    Selector:          app=nginx
    Type:              ClusterIP
    IP:                10.110.54.40
    Port:              http  80/TCP
    TargetPort:        80/TCP
    Endpoints:             # empty
    Session Affinity:  None
    Events:            <none>
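
Readiness gating pays off most when a service has several replicas behind it. The sketch below is a hypothetical Deployment (its name and replica count are assumptions) whose pods carry the app=nginx label, so nginx-service selects them; the readiness path is /index.html, which exists in the stock image, so the replicas become ready without manual steps.

```yaml
# Hypothetical Deployment; name and replica count are illustrative.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-readiness-demo
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx              # matches the selector of nginx-service
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80
        readinessProbe:
          httpGet:
            port: 80
            path: /index.html
            scheme: HTTP
          periodSeconds: 10
          timeoutSeconds: 3
```

If index.html is removed from one replica, only that pod's address should move to NotReadyAddresses, while the service keeps forwarding traffic to the other replica.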

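1.6 Configuring health checks in TKE

TKE lets you configure health checks for an application. The settings are part of each Workload type and can be generated from the template: choose the advanced options while defining the workload. Health checks are disabled by default. TKE offers the two probes introduced earlier, the liveness probe (livenessProbe) and the readiness probe (readinessProbe), which can be enabled independently as needed.

Figure: TKE health check

After enabling a probe, configure the check itself. The three methods described above are supported: command execution, TCP port check, and HTTP request check.

Figure: TKE health check methods

Pick a check method and fill in its parameters, such as the startup delay, check interval, and response timeout. Taking the HTTP request check as an example:

Figure: TKE HTTP health check method

Once configured, the YAML is generated automatically when the workload is created. For the deployment just created, the generated YAML with the health check looks like this: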

    apiVersion: apps/v1beta2
    kind: Deployment
    metadata:
      annotations:
        deployment.kubernetes.io/revision: "1"
        description: tke-health-check-demo
      creationTimestamp: "2019-09-30T12:28:42Z"
      generation: 1
      labels:
        k8s-app: tke-health-check-demo
        qcloud-app: tke-health-check-demo
      name: tke-health-check-demo
      namespace: default
      resourceVersion: "2060365354"
      selfLink: /apis/apps/v1beta2/namespaces/default/deployments/tke-health-check-demo
      uid: d6cf1f25-e37d-11e9-87fd-567eb17a3218
    spec:
      minReadySeconds: 10
      progressDeadlineSeconds: 600
      replicas: 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          k8s-app: tke-health-check-demo
          qcloud-app: tke-health-check-demo
      strategy:
        rollingUpdate:
          maxSurge: 1
          maxUnavailable: 0
        type: RollingUpdate
      template:
        metadata:
          creationTimestamp: null
          labels:
            k8s-app: tke-health-check-demo
            qcloud-app: tke-health-check-demo
        spec:
          containers:
          - image: nginx:latest
            imagePullPolicy: Always
            livenessProbe:    # health check generated from the template
              failureThreshold: 1
              httpGet:
                path: /
                port: 80
                scheme: HTTP
              periodSeconds: 3
              successThreshold: 1
              timeoutSeconds: 2
            name: tke-health-check-demo
            resources:
              limits:
                cpu: 500m
                memory: 1Gi
              requests:
                cpu: 250m
                memory: 256Mi
            securityContext:
              privileged: false
              procMount: Default
            terminationMessagePath: /dev/termination-log
            terminationMessagePolicy: File
          dnsPolicy: ClusterFirst
          imagePullSecrets:
          - name: qcloudregistrykey
          - name: tencenthubkey
          restartPolicy: Always
          schedulerName: default-scheduler
          securityContext: {}
          terminationGracePeriodSeconds: 30
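
The generated manifest uses apps/v1beta2, which Kubernetes 1.16 and later no longer serve. The sketch below is a hand-written apps/v1 equivalent, not output from TKE: it keeps the same labels and liveness settings and drops the server-populated fields (uid, resourceVersion, selfLink, creationTimestamp) along with the settings that are not needed to show the probe.

```yaml
# Hand-written apps/v1 sketch of the deployment above; not generated by TKE.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tke-health-check-demo
  namespace: default
  labels:
    k8s-app: tke-health-check-demo
    qcloud-app: tke-health-check-demo
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: tke-health-check-demo
      qcloud-app: tke-health-check-demo
  template:
    metadata:
      labels:
        k8s-app: tke-health-check-demo
        qcloud-app: tke-health-check-demo
    spec:
      containers:
      - name: tke-health-check-demo
        image: nginx:latest
        livenessProbe:
          httpGet:
            path: /
            port: 80
            scheme: HTTP
          periodSeconds: 3
          timeoutSeconds: 2
          successThreshold: 1
          failureThreshold: 1   # a single failed probe restarts the container
```

Note how strict these settings are: with failureThreshold set to 1 and a 3s period, one slow or failed response is enough to trigger a restart, so a higher threshold may be worth considering for real workloads.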

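Closing notes

This chapter covered two of the Kubernetes health check probes, livenessProbe and readinessProbe. livenessProbe is a liveness check that inspects the running state inside the container. readinessProbe is a readiness check that decides whether the pod can accept traffic; it usually works together with a service's endpoints, so that a pod is added to the endpoints when it is ready and removed when it is not, which gives services their health checking and service discovery behaviour. Probes offer three check methods for different scenarios: 1. exec, which runs a command or shell snippet; 2. tcpSocket, which probes a port by opening a TCP connection; 3. httpGet, which probes by sending an HTTP request. Hands-on practice is the best way to get comfortable with them.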

Appendix

Health checks: https://kubernetes.io/docs/tasks/configure-pod-container/configure-liveness-readiness-startup-probes/

Configuring health checks in TKE: https://cloud.tencent.com/document/product/457/32815
